极智项目 | YOLO11目标检测算法训练+TensorRT部署实战

- 项目获取:https://download.csdn.net/download/weixin_42405819/89930107

- 项目作者:极智视界

- 项目init时间:20241001

- 项目介绍:对YOLO11基于coco_minitrain_10k数据集进行训练,并使用py TensorRT进行加速推理,包括导onnx和onnx2trt转换脚本

- 项目参考:YOLO11部分参考 => https://github.com/ultralytics/ultralytics (这是YOLO11算法的官方出处)

2. 算法训练

(1) 数据集整备

数据集放在 datasets/coco_minitrain_10k数据集目录结构如下:

datasets/└── coco_mintrain_10k/├── annotations/│ ├── instances_train2017.json│ ├── instances_val2017.json│ ├── ... (其他标注文件)├── train2017/│ ├── 000000000001.jpg│ ├── ... (其他训练图像)├── val2017/│ ├── 000000000001.jpg│ ├── ... (其他验证图像)└── test2017/├── 000000000001.jpg├── ... (其他测试图像)

(2) 训练环境搭建

conda creaet -n yolo11_py310 python=3.10conda activate yolo11_py310pip install -U -r train/requirements.txt

(3) 推理测试

先下载预训练权重:

bash 0_download_wgts.sh执行预测测试:

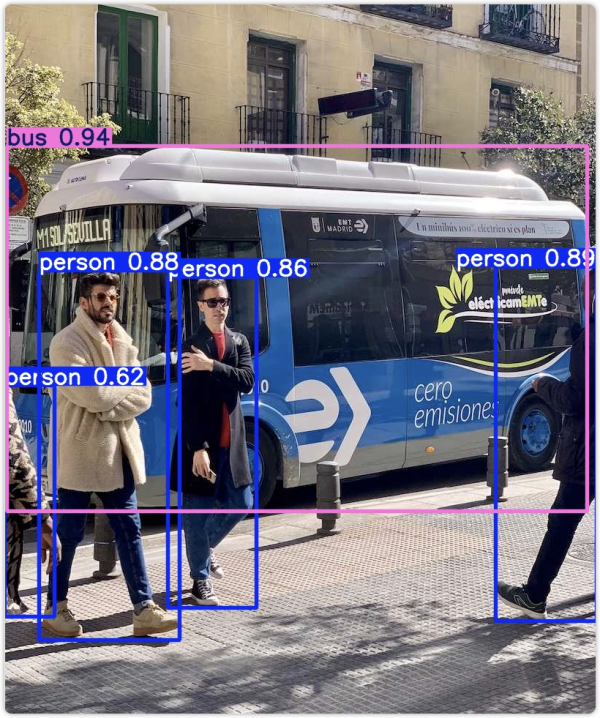

bash 1_run_predict_yolo11.sh预测结果保存在 runs 文件夹下,效果如下:

(4) 开启训练

已经准备好一键训练肩膀,直接执行训练脚本:

bash 2_run_train_yolo11.sh其中其作用的代码很简单,就在 train/train_yolo11.py 中,如下:

# Load a modelmodel = YOLO(curr_path + "/wgts/yolo11n.pt")# Train the modeltrain_results = model.train(data= curr_path + "/cfg/coco128.yaml", # path to dataset YAMLepochs=100, # number of training epochsimgsz=640, # training image sizedevice="0", # device to run on, i.e. device=0 or device=0,1,2,3 or device=cpu)# Evaluate model performance on the validation setmetrics = model.val()

主要就是配置一下训练参数,如数据集路径、训练轮数、显卡ID、图片大小等,然后执行训练即可

训练完成后,训练日志会在 runs/train 文件夹下,比如训练中 val 预测图片如下:

这样就完成了算法训练

3. 算法部署

使用 TensorRT 进行算法部署

(1) 导ONNX

直接执行一键导出ONNX脚本:

bash 3_run_export_onnx.sh在脚本中已经对ONNX做了sim的简化

生成的ONNX以及_simONNX模型保存在wgts文件夹下

(2) 安装tensorrt环境

直接去NVIDIA的官网下载(https://developer.nvidia.com/tensorrt/download)对应版本的tensorrt TAR包,解压基本步骤如下:

tar zxvf TensorRT-xxx-.tar.gz# 软链trtexecsudo ln -s /path/to/TensorRT/bin/trtexec /usr/local/bin# 验证一下trtexec --help# 安装trt的python接口cd pythonpip install tensorrt-xxx.whl

(3) 生成trt模型引擎文件

直接执行一键生成trt模型引擎的脚本:

bash 4_build_trt_engine.sh正常会在wgts路径下生成yolo11n.engine,并有类似如下的日志:

[10/02/2024-21:28:48] [V] === Explanations of the performance metrics ===[10/02/2024-21:28:48] [V] Total Host Walltime: the host walltime from when the first query (after warmups) is enqueued to when the last query is completed.[10/02/2024-21:28:48] [V] GPU Compute Time: the GPU latency to execute the kernels for a query.[10/02/2024-21:28:48] [V] Total GPU Compute Time: the summation of the GPU Compute Time of all the queries. If this is significantly shorter than Total Host Walltime, the GPU may be under-utilized because of host-side overheads or data transfers.[10/02/2024-21:28:48] [V] Throughput: the observed throughput computed by dividing the number of queries by the Total Host Walltime. If this is significantly lower than the reciprocal of GPU Compute Time, the GPU may be under-utilized because of host-side overheads or data transfers.[10/02/2024-21:28:48] [V] Enqueue Time: the host latency to enqueue a query. If this is longer than GPU Compute Time, the GPU may be under-utilized.[10/02/2024-21:28:48] [V] H2D Latency: the latency for host-to-device data transfers for input tensors of a single query.[10/02/2024-21:28:48] [V] D2H Latency: the latency for device-to-host data transfers for output tensors of a single query.[10/02/2024-21:28:48] [V] Latency: the summation of H2D Latency, GPU Compute Time, and D2H Latency. This is the latency to infer a single query.[10/02/2024-21:28:48] [I]&&&& PASSED TensorRT.trtexec [TensorRT v100500] [b18] # trtexec --onnx=../wgts/yolo11n_sim.onnx --saveEngine=../wgts/yolo11n.engine --fp16 --verbose

(4) 执行trt推理

直接执行一键推理脚本:

bash 5_infer_trt.sh实际的trt推理脚本在 deploy/infer_trt.py推理成功会有如下日志:

------ trt infer success! ------推理结果保存在 deploy/output.jpg

如下:

来源:极智视界

好文章,需要你的鼓励

Glean年收入突破3亿美元,削减AI成本成核心卖点

企业AI搜索公司Glean宣布年度经常性收入(ARR)达3亿美元,较15个月前的1亿美元增长三倍。尽管谷歌、微软、OpenAI等科技巨头纷纷入局企业AI搜索市场,Glean凭借"上下文图谱"技术深度理解企业业务需求,并帮助客户显著降低AI计算成本。该公司提供按用量计费和混合定价两种模式,客户涵盖Databricks、Reddit、Pinterest及三星等企业。Glean上轮融资后估值达72亿美元。

香港中文大学与MiniMax联手破解AI图像描述的“说多错多、说少漏多“困局

香港中文大学与MiniMax提出ClaimDiff-RL框架,将图像描述的AI训练从整体打分升级为逐条核查,有效解决了传统方式导致AI"少说保平安"的问题,同时在多项基准测试上超越Gemini-3-Pro-Preview。

蓝色起源“新格伦“火箭在佛罗里达测试中发生爆炸

杰夫·贝索斯旗下的蓝色起源公司在佛罗里达卡纳维拉尔角进行静态点火测试时,新格伦重型火箭发生爆炸。这是美国历史上最大规模的火箭爆炸之一,也是蓝色起源公司遭遇的最严重失败。所有人员安全,但该事故可能导致新格伦火箭项目长期暂停。此前该火箭已成功完成三次发射,并实现了助推器回收和重复使用。

NTU、HKU等多所顶校联手,让AI同时“多角度看片“——视频理解的并行探针革命

ParaVT是一个由南洋理工等多校联合提出的并行视频工具调用框架,通过让AI同时分析多段视频并引入PARA-GRPO算法解决训练中的格式崩溃与工具跳过问题,在六项长视频理解测试中平均提升约7.9%。